Measure Success with DORA Metrics Part 3: Build - Integrating AI

This is Part 3 of the Measure Success with DORA Metrics series. In Part 2, we covered the Plan phase and how work enters the system. In this post, we're looking at the Build phase, and specifically at how AI can break down the dependency wall between development and platform teams that quietly kills delivery velocity.

Platform engineering became mainstream so fast that most organizations didn't have time to think about how it should actually work.

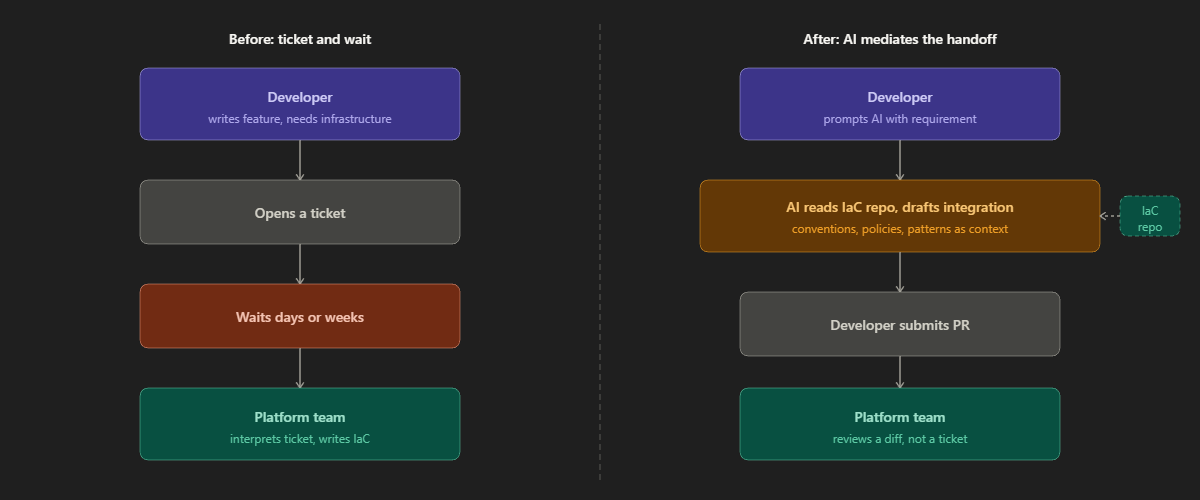

Teams were told to stand up a platform team, so they did. What they got was a new silo, slightly more sophisticated than the old SRE org, but still a bottleneck. Developers write code and then wait. They open a ticket. They wait for a platform engineer to provision something, wire up a new service, or extend the IaC to accommodate a new feature. The velocity gains promised by platform engineering don't materialize because the handoff model survived.

This is the dependency wall, and AI is the most practical tool we currently have to tear it down.

The Original Promise of Shift Left

Before platform engineering, the DevOps movement gave us shift left: the idea that you move operational responsibilities earlier in the SDLC, out of SRE and into development. Stop treating reliability, security, and infrastructure as downstream concerns. Build them in from the start.

The logic was sound. The execution was often messy. Developers didn't want to become ops engineers. SRE teams didn't want to give up control. You ended up with a cultural negotiation that consumed more energy than the actual work.

Platform engineering was supposed to solve this by giving teams a paved road: standardized, self-service infrastructure that development teams could consume without needing to understand everything underneath. The theory was that IaC would provide a consistent, version-controlled foundation aligning both teams around a shared configuration model, making infrastructure predictable, auditable, and easier to evolve collaboratively. (Source: controlmonkey.io, Platform Engineers vs DevOps: IaC in Focus 2025)

That's the theory. Most teams are still stuck at "open a ticket and wait."

What the 2025 DORA Research Actually Says

The 2025 DORA report is blunt: a high-quality internal platform correlates directly with an organization's ability to unlock the value of AI. Not a nice-to-have. A prerequisite. (Source: dora.dev, 2025 State of AI-Assisted Software Development)

The research identified seven capabilities that amplify the benefits of AI in software development. Two are particularly relevant here. The first is AI-accessible internal data: connecting AI tools to internal documentation, codebases, and decision logs improves output quality and developer effectiveness. The second is quality internal platforms: where they exist, AI's positive impact scales across the organization. (Source: Google Cloud Blog, Introducing DORA's inaugural AI Capabilities Model)

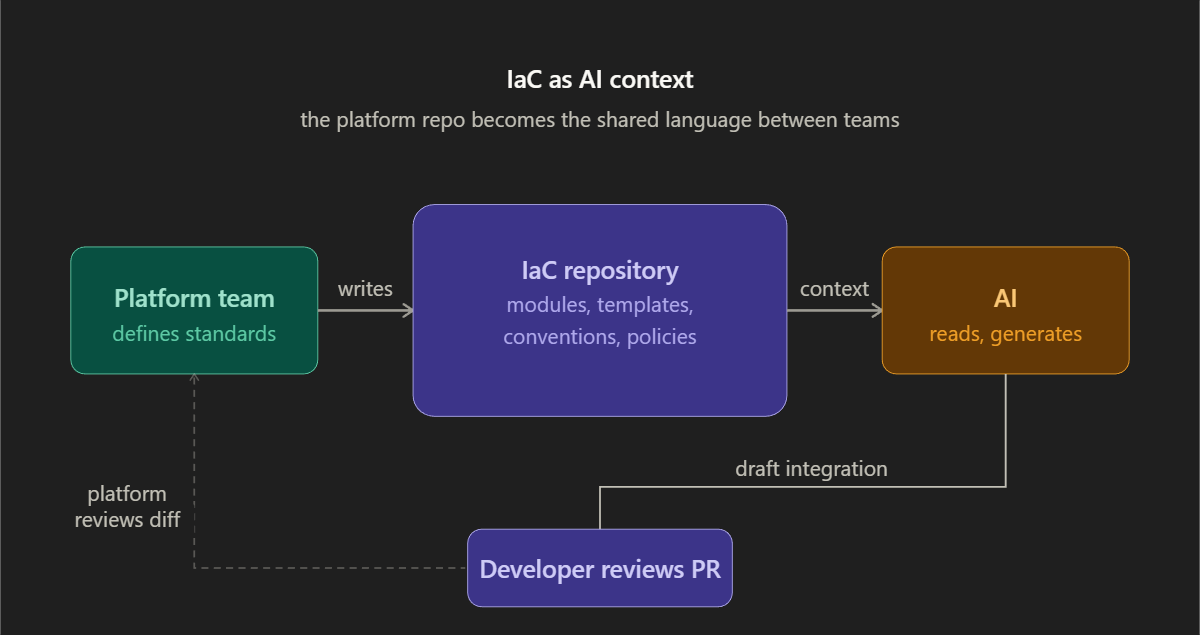

Read those together. The platform team's job is no longer just to build infrastructure. It's to make that infrastructure accessible to AI so that AI can make it accessible to developers. The platform becomes the interface.

The same research also confirms the risk: AI accelerates software development, but that acceleration exposes weaknesses downstream. Without robust control systems, strong automated testing, mature version control practices, and fast feedback loops, an increase in change volume leads to instability. (Source: Google Cloud Blog, Announcing the 2025 DORA Report)

This is the tension. AI makes developers faster. But faster in the wrong system means faster failures, larger batches, and more instability. The platform is the control layer that keeps speed safe.

The IaC Repository as AI Context

Here's a specific and practical proposal.

Your platform team maintains the IaC: environment configurations, network definitions, service templates, security policies, cost controls. That repository represents the organizational knowledge of how your infrastructure works. It's the platform team's entire output, codified.

Right now, when a development team needs to integrate a new service or feature into the platform, they open a ticket. A platform engineer reads the ticket, interprets the requirement, makes the IaC changes, opens a PR, waits for review, merges, and eventually responds to the developer. That cycle takes days, sometimes weeks.

The proposal is simple: make the IaC repository the primary context for your AI tooling. When a developer needs to provision a new resource, add a service, or configure a new environment, they prompt AI against the live IaC. AI reads the existing patterns, understands your conventions, and generates the integration. The developer reviews it, submits it as a pull request against the platform repository, and the platform team reviews a diff rather than a requirements ticket.
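Here's a minimal sketch of what "IaC repository as context" can look like in practice. It assumes the repository is checked out locally and that a plain concatenation of files fits your model's context budget. The file suffixes, the character budget, and the prompt wording are all hypothetical, and the call to an actual AI tool is deliberately left out.

```python
from pathlib import Path

# Hypothetical set of file types treated as IaC; adjust to your repository.
IAC_SUFFIXES = {".tf", ".tfvars", ".yaml", ".yml"}


def collect_iac_context(repo_root, max_chars=20_000):
    """Concatenate IaC files under repo_root into one context string.

    Files are visited in sorted order so the result is deterministic, and
    collection stops before the character budget would be exceeded.
    """
    root = Path(repo_root)
    parts = []
    used = 0
    for path in sorted(root.rglob("*")):
        if not path.is_file() or path.suffix not in IAC_SUFFIXES:
            continue
        body = path.read_text(encoding="utf-8", errors="replace")
        # Label each file with its repository-relative path.
        chunk = f"### {path.relative_to(root).as_posix()}\n{body}\n"
        if used + len(chunk) > max_chars:
            break
        parts.append(chunk)
        used += len(chunk)
    return "".join(parts)


def build_prompt(request, iac_context):
    """Combine the developer's request with the repository context."""
    return (
        "Follow the conventions in the existing infrastructure code.\n\n"
        f"{iac_context}\n"
        f"Request: {request}\n"
        "Respond with a diff against the repository."
    )
```

The output of `build_prompt` is what you would hand to whatever AI tooling you use. The resulting diff still goes through a pull request like any other change.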

The platform team's workload shifts from writing IaC to reviewing it. That's a much better use of their time and expertise. They move from producer to gatekeeper, which is what they should have been all along.

For this to work, a few things have to be true. The IaC has to be well-organized and consistently documented. Conventions have to be enforced. The repository structure has to be navigable. None of this is exotic. It's just good engineering discipline applied to the platform layer.

Other Places AI Eliminates the Handoff

The IaC pattern is the most immediate opportunity, but it isn't the only one.

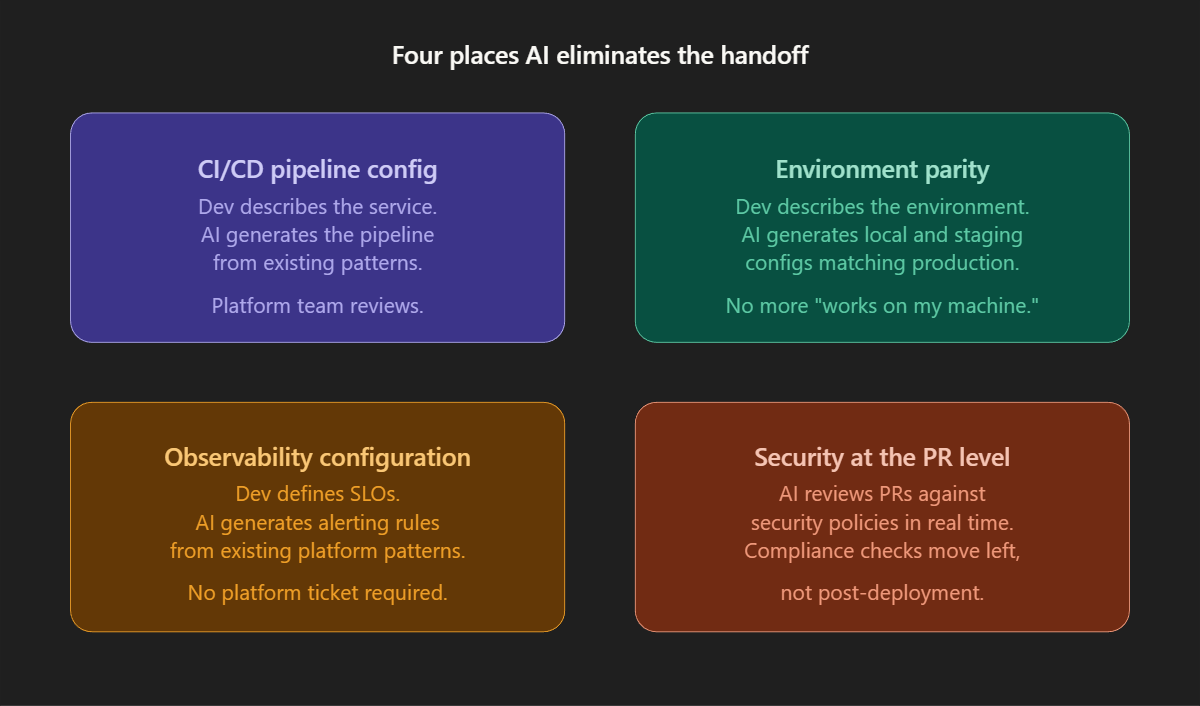

CI/CD pipeline configuration. When a team ships a new service, they need a pipeline. Someone has to write the pipeline definition: the build steps, test gates, artifact management, deployment targets. This is templated work that your platform team has solved a dozen times, but every new service still requires a human to adapt the template. AI with access to your existing pipeline configurations can generate the new one from a description of the service. The developer prompts, AI drafts, platform team reviews.

Environment parity. One of the most common sources of "works on my machine" failures is configuration drift between local, staging, and production. Developers should be able to describe the environment they need and have AI generate the local configuration and the corresponding staging spec, consistent with what production actually looks like. The platform team defines the production patterns once; AI propagates them down.
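Whatever generates the local and staging specs, it helps to verify parity mechanically. The sketch below assumes each environment can be flattened into a simple key-value mapping. The keys and the `allowed_to_differ` list are hypothetical examples, not a real schema.

```python
MISSING = "<missing>"


def find_drift(production, candidate, allowed_to_differ=()):
    """Return {key: (production_value, candidate_value)} for unexpected differences.

    Keys listed in allowed_to_differ (replica counts, for example) are skipped,
    since some settings are expected to vary between environments.
    """
    drift = {}
    for key in sorted(set(production) | set(candidate)):
        if key in allowed_to_differ:
            continue
        prod_value = production.get(key, MISSING)
        cand_value = candidate.get(key, MISSING)
        if prod_value != cand_value:
            drift[key] = (prod_value, cand_value)
    return drift


production = {"postgres_version": "15", "replicas": 3, "tls": True}
local = {"postgres_version": "14", "replicas": 1, "tls": True}

# Only the database version is reported; replicas are allowed to differ.
drift = find_drift(production, local, allowed_to_differ={"replicas"})
```

Run against AI-generated environment specs, a check like this catches the case where the generated configuration looks plausible but has quietly diverged from production.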

Observability configuration. Developers setting up a new service shouldn't need to ask a platform engineer how to wire up alerting. They should be able to describe the service's SLOs and have AI generate the alerting rules based on your existing observability patterns. AI is already being applied to analyze infrastructure failures, detect configuration drift, and identify security vulnerabilities in IaC definitions. (Source: platformengineering.com, How AI Is Reshaping Infrastructure at the Core of Platform Engineering)
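To make "describe the SLOs, get alerting rules" concrete, here is a small sketch of the arithmetic involved, using the common burn-rate approach to SLO alerting. The `Slo` class and the rule's field names are hypothetical and not tied to any particular alerting system.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Slo:
    service: str
    target: float                  # 0.999 means 99.9% of requests succeed
    period_hours: float = 30 * 24  # SLO evaluated over 30 days


def burn_rate(budget_fraction, window_hours, period_hours):
    """Burn rate that spends budget_fraction of the error budget in window_hours."""
    return budget_fraction * period_hours / window_hours


def alert_rule(slo, budget_fraction, window_hours):
    """Build a rule that fires when the error rate implies the given burn rate."""
    rate = burn_rate(budget_fraction, window_hours, slo.period_hours)
    return {
        "name": f"{slo.service}-burn-{window_hours}h",
        "window_hours": window_hours,
        "burn_rate": rate,
        # The error budget is (1 - target); the threshold scales it by the burn rate.
        "error_rate_threshold": rate * (1 - slo.target),
    }


rule = alert_rule(Slo(service="checkout", target=0.999), budget_fraction=0.02, window_hours=1)
```

For a 30-day period, spending 2% of the budget in one hour corresponds to a burn rate of 14.4, so this rule would fire when roughly 1.44% of requests fail over the hour. The value of AI here is in mapping a developer's description onto inputs like these, consistent with the patterns your platform team already uses.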

Security and compliance checks at the PR level. Rather than a security review happening post-deployment or as a separate gate, AI can review pull requests against your security policies in real time. Platform engineering is moving toward injecting controls directly at the infrastructure layer, making non-compliant configurations not just discouraged but technically impossible. (Source: platformengineering.org, 10 Platform Engineering Predictions for 2026) AI gets you partway there without requiring months of policy-as-code implementation.
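AI review works best alongside a few deterministic checks that run on every pull request. The sketch below is not the AI review itself. It shows the kind of simple rule you might run next to it, assuming the changed resources have already been parsed into dictionaries. The resource types, field names, and policies are hypothetical.

```python
def check_policies(resources):
    """Return a list of human-readable violations for the given resources."""
    violations = []
    for res in resources:
        name = res.get("name", "<unnamed>")
        # Hypothetical policy 1: storage buckets may never be public.
        if res.get("type") == "storage_bucket" and res.get("public", False):
            violations.append(f"{name}: storage buckets must not be public")
        # Hypothetical policy 2: every resource must be encrypted at rest.
        if not res.get("encrypted", False):
            violations.append(f"{name}: encryption at rest is required")
    return violations


changed = [
    {"type": "storage_bucket", "name": "reports", "public": True, "encrypted": True},
    {"type": "database", "name": "orders", "encrypted": False},
    {"type": "database", "name": "users", "encrypted": True},
]
violations = check_policies(changed)
```

A non-empty result would fail the PR check before a human or an AI reviewer spends any time on it.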

The Platform Team's Role Doesn't Disappear

None of this removes the need for platform engineers. It changes what they're doing.

Platform teams stop being the bottleneck for routine integrations and start being the architects of the standards that AI enforces. They define the modules. They establish the conventions. They write the documentation that gives AI the context it needs to generate correct output. They review the PRs that developers produce with AI assistance. They handle the complex, non-standard cases that AI gets wrong, and AI will get some cases wrong.

This is a better job. Platform engineers become the designers of a self-service system rather than the manual processors of individual requests.

The teams that will struggle are the ones with undocumented IaC, inconsistent conventions, and no investment in making their platform legible. You cannot give AI access to chaos and expect it to generate order. The 2025 DORA research puts it plainly: AI doesn't create organizational excellence, it amplifies what already exists. High-performing organizations with solid foundations use AI to accelerate. Dysfunctional systems use it to generate faster failures. (Source: IT Revolution, AI's Mirror Effect: How the 2025 DORA Report Reveals Your Organization's True Capabilities)

What This Means for Your Build Phase Metrics

Change lead time is the metric most directly affected. Every hour a change sits waiting on a platform ticket is an hour on the clock between code commit and production deployment. Removing that dependency, making infrastructure integration something a developer can do with AI assistance inside the same sprint, directly compresses lead time.
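If you want to watch this number move during a pilot, the calculation is simple. This sketch assumes you can export a commit timestamp and a production deployment timestamp for each change. The sample data is invented for illustration.

```python
from datetime import datetime
from statistics import median


def lead_times_hours(changes):
    """changes: iterable of (commit_time, deploy_time) datetime pairs."""
    return [
        (deployed - committed).total_seconds() / 3600
        for committed, deployed in changes
    ]


def median_lead_time_hours(changes):
    """Median hours from commit to production deployment."""
    return median(lead_times_hours(changes))


# Invented sample: three changes committed at the same time,
# deployed after 2, 26, and 50 hours.
sample = [
    (datetime(2025, 1, 1, 9), datetime(2025, 1, 1, 11)),
    (datetime(2025, 1, 1, 9), datetime(2025, 1, 2, 11)),
    (datetime(2025, 1, 1, 9), datetime(2025, 1, 3, 11)),
]
median_hours = median_lead_time_hours(sample)
```

The median is used here rather than the mean so that one change stuck behind a long-running ticket doesn't hide an otherwise healthy trend.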

Deployment frequency benefits indirectly. When developers can integrate new features with the platform without blocking, small batches stay small. The temptation to bundle multiple features because "we're already waiting for platform anyway" disappears.

Change fail rate and deployment rework rate are where the discipline has to hold. AI-generated IaC needs the same review rigor as human-generated IaC, probably more, given that AI will confidently produce syntactically valid but semantically wrong configurations. The platform team's review role is not bureaucratic overhead. It's the quality gate that prevents a speed gain from becoming a stability liability.
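Both rates are ratios over the same set of deployments. The sketch below assumes each deployment record carries two flags you would have to populate from your own incident and release data. The `Deployment` fields are hypothetical, and the flag meanings reflect a common reading of the two metrics rather than an official schema.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Deployment:
    caused_failure: bool  # needed a rollback, hotfix, or other remediation
    unplanned_fix: bool   # shipped only to repair an earlier change


def rate(deployments, flag):
    """Fraction of deployments where the named boolean flag is set."""
    if not deployments:
        return 0.0
    return sum(1 for d in deployments if getattr(d, flag)) / len(deployments)


history = [
    Deployment(caused_failure=False, unplanned_fix=False),
    Deployment(caused_failure=True, unplanned_fix=False),
    Deployment(caused_failure=False, unplanned_fix=True),
    Deployment(caused_failure=False, unplanned_fix=False),
]
change_fail_rate = rate(history, "caused_failure")
rework_rate = rate(history, "unplanned_fix")
```

Track both before and after you introduce AI-assisted IaC changes. If lead time drops while either of these climbs, the review gate isn't holding.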

The Practical Starting Point

If you're reading this and thinking "I want this," the path forward is not to buy a new tool. It starts with the platform team's repository.

Audit the IaC for consistency. Establish naming conventions if they don't exist. Add documentation to modules explaining their purpose and usage constraints. Organize the repository so that a developer, or an AI processing it as context, can understand what's there and how things connect.
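Part of that audit can be automated. As a minimal sketch, assuming your IaC is Terraform and your convention is snake_case resource names (both are assumptions for the sake of the example), a few lines of Python can flag names that break the convention:

```python
import re

# Matches Terraform-style declarations: resource "TYPE" "NAME"
RESOURCE_DECL = re.compile(r'^\s*resource\s+"([^"]+)"\s+"([^"]+)"', re.MULTILINE)

# Hypothetical convention: lowercase snake_case, starting with a letter.
NAME_CONVENTION = re.compile(r"[a-z][a-z0-9_]*")


def nonconforming_names(hcl_text):
    """Return resource names in hcl_text that break the naming convention."""
    return [
        name
        for _resource_type, name in RESOURCE_DECL.findall(hcl_text)
        if not NAME_CONVENTION.fullmatch(name)
    ]


example = '''
resource "aws_sqs_queue" "orders_queue" {
}

resource "aws_db_instance" "OrdersDB" {
}
'''
bad_names = nonconforming_names(example)
```

A regular expression is not a real HCL parser, so treat this as a quick first pass rather than a substitute for proper linting.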

Then run a pilot. Take one development team. Give them access to your AI tooling with the IaC repository as context. Have them try to provision something or wire up a new service. See where AI succeeds and where it fails. Use those failures to improve the documentation and structure of the repository.

Most of what you'll build in that process is engineering hygiene you should have had anyway.

Sources:

DORA 2025 State of AI-Assisted Software Development: dora.dev

DORA 2025 AI Capabilities Model: Google Cloud Blog

Announcing the 2025 DORA Report: Google Cloud Blog

AI's Mirror Effect: How the 2025 DORA Report Reveals Your Organization's True Capabilities: IT Revolution

How AI Is Reshaping Infrastructure at the Core of Platform Engineering: platformengineering.com

10 Platform Engineering Predictions for 2026: platformengineering.org

Platform Engineers vs DevOps: IaC in Focus 2025: controlmonkey.io